LLMs generate language. MemBrain provides everything else a brain needs — and every function talks to every other.

🧠

Memory Formation

MemBrain extracts knowledge entries from every AI response and deduplicates them semantically, like a hippocampus for your AI stack. Past conversations enrich new prompts, so your organization builds long-term memory from every interaction.
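As a rough illustration of semantic dedup, the sketch below only stores a new entry when nothing similar is already in the store. It is not MemBrain's implementation: `embed`, `add_entry`, and the 0.8 threshold are hypothetical, and the bag-of-words vector is a toy stand-in for a real embedding model.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for a real embedding model: a bag-of-words vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def add_entry(store: list, candidate: str, threshold: float = 0.8) -> bool:
    """Append candidate unless a stored entry is at least `threshold` similar (hypothetical helper)."""
    vec = embed(candidate)
    if any(cosine(vec, embed(existing)) >= threshold for existing in store):
        return False  # treated as a duplicate, not stored
    store.append(candidate)
    return True

store = []
first_added = add_entry(store, "The billing API uses OAuth2 tokens")
duplicate_added = add_entry(store, "the billing api uses oauth2 tokens")
other_added = add_entry(store, "Deploys run every Friday")
```

With a real embedding model the same threshold check would also catch paraphrases, which the toy word-count vector cannot.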

🛡

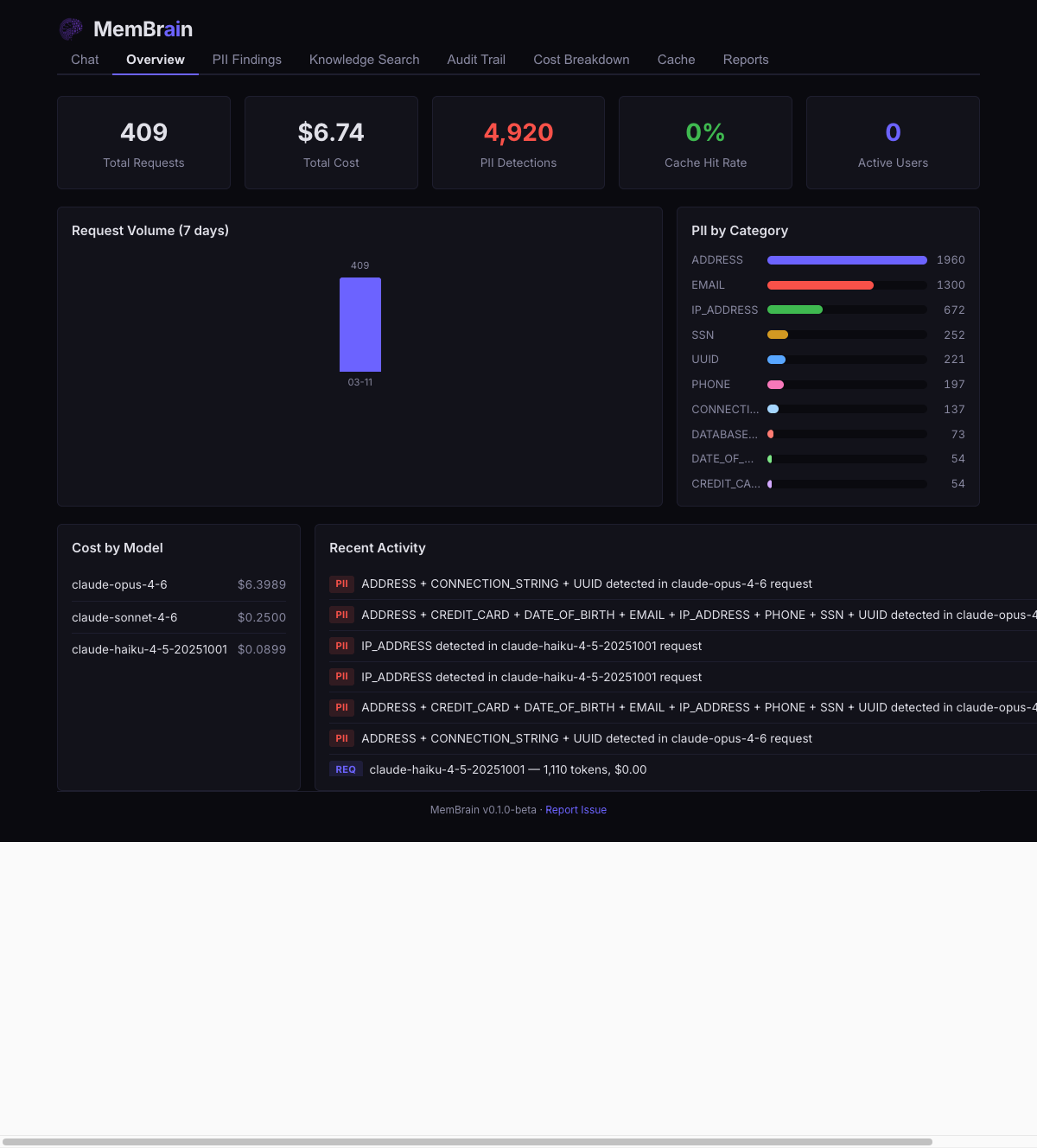

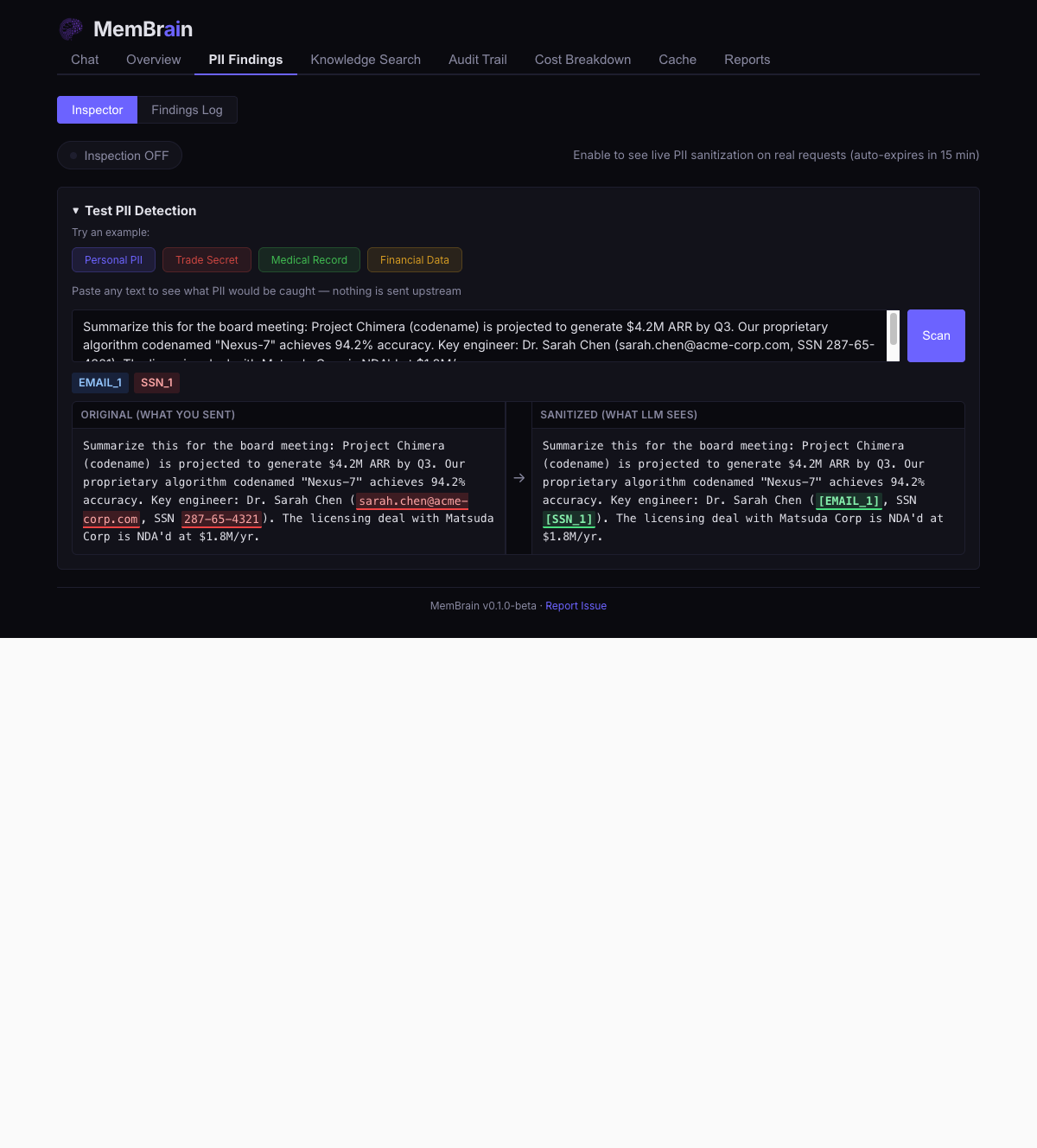

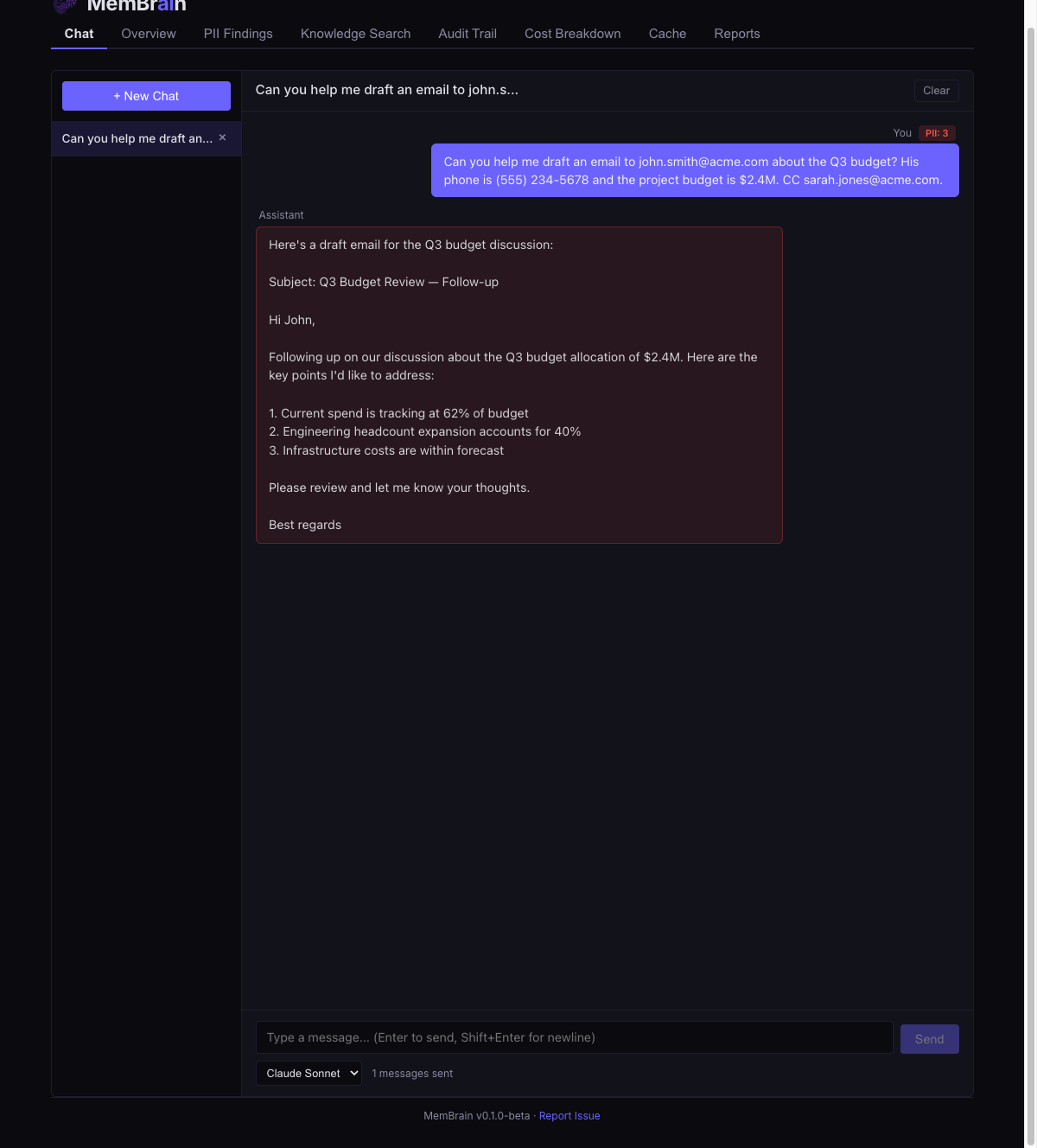

Threat Detection

25+ PII patterns plus ML NER fire on every request — an amygdala-like reflex that triggers before caching, before logging, before the LLM sees anything. Your cache keys and audit trail are always clean.
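The ordering described above (redact first, then cache and log) can be sketched as follows. This is a minimal illustration with two hand-written regex patterns, not the product's 25+ patterns or its ML NER stage; `PII_PATTERNS`, `redact`, and `cache_key` are hypothetical names.

```python
import hashlib
import re

# Two illustrative patterns only; a real deployment would need many more.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(text: str) -> str:
    """Replace each PII match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

def cache_key(prompt: str) -> str:
    """Hash the redacted prompt so raw PII never reaches the cache key."""
    return hashlib.sha256(redact(prompt).encode("utf-8")).hexdigest()

clean = redact("Email jane.doe@example.com about SSN 123-45-6789")
```

Because the key is derived from the redacted text, two prompts that differ only in the email address they mention map to the same cache entry.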

⚖

Judgment & Policy

Tool policy enforcement, rate limits, budget caps, and compliance rules. The prefrontal cortex of your AI stack — deliberate, rule-based decisions about what actions are permitted.
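A rule-based check of this kind might look like the sketch below, which covers only a tool allowlist and a budget cap. The `Policy` class and its fields are hypothetical and do not reflect MemBrain's actual configuration schema.

```python
from dataclasses import dataclass

@dataclass
class Policy:
    """Hypothetical policy: which tools may run and how much may be spent."""
    allowed_tools: set
    budget_usd: float
    spent_usd: float = 0.0

    def check(self, tool: str, estimated_cost_usd: float):
        """Return (allowed, reason); record the spend only when allowed."""
        if tool not in self.allowed_tools:
            return False, f"tool '{tool}' is not permitted"
        if self.spent_usd + estimated_cost_usd > self.budget_usd:
            return False, "budget cap exceeded"
        self.spent_usd += estimated_cost_usd
        return True, "ok"

policy = Policy(allowed_tools={"search"}, budget_usd=1.0)
first = policy.check("search", 0.6)
blocked_tool = policy.check("shell", 0.1)
over_budget = policy.check("search", 0.6)
```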

🔀

Signal Routing

Privacy-based routing sends sensitive requests to local models. Cost-based routing picks the cheapest provider that fits. Like the thalamus directing signals to the right brain region.
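The two routing rules can be combined in a few lines: filter by privacy first, then pick the cheapest provider that fits. The sketch assumes a hypothetical `Provider` record and uses context-window size as the meaning of "fits"; the provider names and prices are made up for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Provider:
    """Hypothetical provider record used only for this sketch."""
    name: str
    is_local: bool
    cost_per_1k_tokens: float
    max_context_tokens: int

def route(providers, contains_pii: bool, prompt_tokens: int) -> Provider:
    """Keep providers that fit the prompt, restrict to local ones for sensitive requests, pick the cheapest."""
    candidates = [p for p in providers if p.max_context_tokens >= prompt_tokens]
    if contains_pii:
        candidates = [p for p in candidates if p.is_local]
    if not candidates:
        raise LookupError("no provider satisfies the routing constraints")
    return min(candidates, key=lambda p: p.cost_per_1k_tokens)

providers = [
    Provider("local-small", True, 0.50, 8_000),
    Provider("cloud-cheap", False, 0.10, 16_000),
    Provider("cloud-large", False, 1.00, 128_000),
]
public_choice = route(providers, contains_pii=False, prompt_tokens=1_000)
private_choice = route(providers, contains_pii=True, prompt_tokens=1_000)
long_choice = route(providers, contains_pii=False, prompt_tokens=50_000)
```

Raising an error when no candidate remains is one design choice; a system could instead fall back to redacting the request and retrying with remote providers.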

🌙

Memory Consolidation

A periodic background process replays your knowledge store, like sleep cycles for your AI. It marks stale entries, deduplicates near-matches, and prunes low-quality memories, so your knowledge strengthens over time.
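One consolidation pass could be sketched as below. The `Entry` fields, the 90-day staleness window, and the 0.3 quality floor are all assumptions made for illustration, and normalized-text matching stands in for true near-match deduplication.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Entry:
    """Hypothetical knowledge entry."""
    text: str
    quality: float
    last_used: datetime
    stale: bool = False

def consolidate(entries, now, max_age=timedelta(days=90), min_quality=0.3):
    """Prune low-quality entries, drop repeats, and flag old entries as stale."""
    kept, seen = [], set()
    for entry in entries:
        key = " ".join(entry.text.lower().split())  # case and whitespace normalized
        if entry.quality < min_quality or key in seen:
            continue
        seen.add(key)
        entry.stale = (now - entry.last_used) > max_age
        kept.append(entry)
    return kept

now = datetime(2024, 6, 1)
entries = [
    Entry("Invoices are issued monthly", 0.9, datetime(2024, 5, 20)),
    Entry("invoices  are issued MONTHLY", 0.8, datetime(2024, 5, 25)),
    Entry("maybe something about taxes?", 0.1, datetime(2024, 5, 30)),
    Entry("Legacy VPN requires a token", 0.7, datetime(2023, 1, 10)),
]
result = consolidate(entries, now)
```

Stale entries are flagged rather than deleted here, which leaves the decision to remove them to a later step.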

🔍

Semantic Recall

Recall by meaning, not keyword search. Relevant knowledge is found by semantic similarity and injected as context into every prompt. The more you use AI, the richer the recall.
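Recall by meaning usually comes down to ranking stored entries by vector similarity and prepending the best matches to the prompt. The sketch below assumes the vectors were already produced by some embedding model; the three-dimensional vectors, `recall`, and `build_prompt` are hypothetical and purely illustrative.

```python
import math

def cosine(a, b) -> float:
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return dot / norm if norm else 0.0

def recall(query_vec, store, k: int = 2):
    """Return the texts of the k stored entries most similar to the query."""
    ranked = sorted(store, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def build_prompt(question: str, memories) -> str:
    """Inject the recalled entries as context ahead of the user's question."""
    context = "\n".join(f"- {m}" for m in memories)
    return f"Relevant knowledge:\n{context}\n\nQuestion: {question}"

# (text, embedding) pairs with made-up vectors.
store = [
    ("Invoices are issued monthly", [0.9, 0.1, 0.0]),
    ("The office closes at 6pm", [0.0, 0.2, 0.9]),
    ("Refunds take 5 days", [0.8, 0.3, 0.1]),
]
memories = recall([1.0, 0.2, 0.0], store, k=2)
prompt = build_prompt("When will I be billed?", memories)
```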